Manus AI has recently emerged as a prominent general-purpose AI agent, designed to turn user intentions into actionable outcomes. Named after the Latin word for “hand,” Manus symbolizes its ability to carry out tasks rather than just talk about them. Developed by Butterfly Effect, the startup behind the Monica.im assistant, Manus AI aims to bridge the gap between human thought and machine execution, offering an experience closer to collaborating with a human colleague.

What Can Manus AI Do?

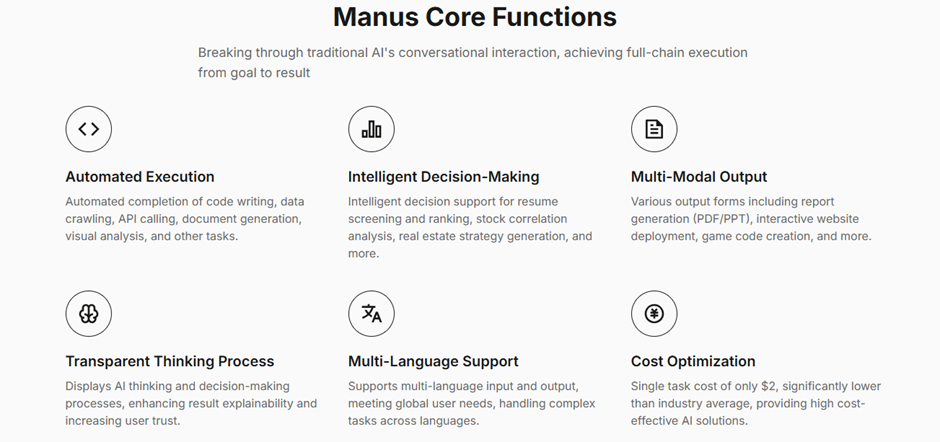

- Autonomous Planning and Execution: Manus AI stands out with its ability to independently decompose complex tasks into manageable steps, plan their execution, and deliver comprehensive results without continuous user intervention.

- Multi-Tool Integration: Unlike traditional chatbots that provide textual suggestions, Manus AI actively integrates with various tools, including browsers, code editors, and APIs, to automate tasks end-to-end. This integration allows it to perform actions such as data analysis, code development, and content creation seamlessly.

- Independent Operation: Manus AI runs tasks inside its own sandboxed computing environment, so it can keep working without constant user supervision. This isolation is also intended to keep user data secure.

- Transparent Thinking Process: Manus AI enhances user trust by displaying its decision-making processes, offering transparency and explainability in its operations.

- Multi-Domain Efficiency: The AI agent excels in various domains, including data analysis, code development, content creation, research, travel planning, and document processing, showcasing its versatility across different industries.
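The planning, tool-use, and transparency features above can be illustrated with a generic plan-and-execute loop. This is a minimal sketch, not Manus's actual implementation: the `plan` function, the tool names `search` and `summarize`, and the `Step`/`AgentRun` types are all hypothetical, and a real agent would ask an LLM to produce the plan instead of hard-coding it.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool type: a tool takes a text input and returns a text result.
# A real agent would wrap a browser, code sandbox, or external API here.
Tool = Callable[[str], str]


@dataclass
class Step:
    tool: str      # name of the tool to invoke
    argument: str  # initial input for that tool


@dataclass
class AgentRun:
    task: str
    log: list[str] = field(default_factory=list)  # visible trace of every action


def plan(task: str) -> list[Step]:
    """Hypothetical planner: decomposes the task into ordered steps.
    Hard-coded here so the sketch stays self-contained."""
    return [Step("search", task), Step("summarize", task)]


def execute(task: str, tools: dict[str, Tool]) -> AgentRun:
    """Runs each planned step in order, feeding each result into the next
    step and recording every action so the process stays inspectable."""
    run = AgentRun(task)
    context = ""
    for i, step in enumerate(plan(task), start=1):
        if step.tool not in tools:
            run.log.append(f"step {i}: unknown tool {step.tool!r}, stopping")
            break
        result = tools[step.tool](context or step.argument)
        run.log.append(f"step {i}: {step.tool} -> {result}")
        context = result
    return run
```

With two stand-in tools, `execute("compare laptop prices", {"search": lambda q: f"results for {q}", "summarize": lambda t: f"summary of ({t})"})` produces a log in which the second step summarizes the output of the first. The log is a simple stand-in for the kind of visible decision trace described under "Transparent Thinking Process."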

The Technology Behind Manus

Despite the attention it has received, Manus AI is not built on a proprietary model. Instead, it relies on existing large language models such as Anthropic’s Claude and Alibaba’s Qwen, refining them for specific tasks. This approach lets Manus perform at a high level quickly, but it also ties its capabilities and limitations to those of the underlying models.

Performance and Benchmarking

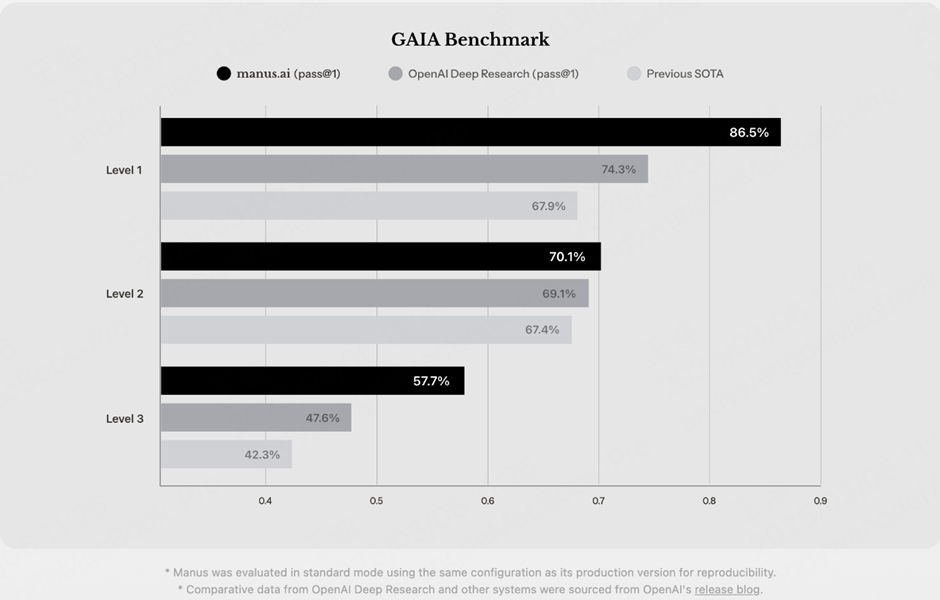

On the GAIA (General AI Assistant) benchmark, Manus AI’s developers report state-of-the-art results across all three difficulty levels, ahead of other AI assistants currently available. For context, humans score 92% on GAIA, while GPT-4 with plugins scores about 15%.

For those who don’t know:

The GAIA (General AI Assistant) benchmark is a test used to measure how well AI systems handle real-world tasks. It checks an AI's ability to think critically, process different types of information (like text and images), browse the web, and use tools effectively. This benchmark helps researchers understand how close AI is to functioning like a human when solving complex problems.

Features of the GAIA Benchmark:

- Diverse Question Set: GAIA comprises 466 questions with unambiguous answers, grouped into three levels of difficulty. These questions test different aspects of an AI assistant’s functionality, from basic information retrieval to complex problem-solving that requires autonomous decision-making.

- Real-World Scenarios: The benchmark emphasizes practical tasks that are straightforward for humans but challenging for AI systems. For instance, questions may require the AI to browse the internet for information, interpret multi-modal data, or utilize specific tools to arrive at a solution.

- Human vs. AI Performance: In evaluations, human respondents have achieved a 92% success rate on GAIA tasks, whereas advanced AI models like GPT-4, even when equipped with plugins, have scored around 15%. This significant disparity highlights the current limitations of AI systems in replicating human-like reasoning and adaptability.
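Because every GAIA question has a single unambiguous answer, a score such as 92% or 15% is essentially the fraction of questions answered exactly right, typically broken down by difficulty level. The sketch below assumes a deliberately simplified normalization rule (trim whitespace, lowercase, drop thousands separators). GAIA's official scorer applies its own, more careful normalization, and the toy data in the usage note is invented purely for illustration.

```python
from collections import defaultdict
from typing import Iterable


def normalize(answer: str) -> str:
    """Simplified normalization (an assumption, not GAIA's official rule):
    trim whitespace, lowercase, and drop commas so '1,000' matches '1000'."""
    return answer.strip().lower().replace(",", "")


def score_by_level(results: Iterable[tuple[int, str, str]]) -> dict[int, float]:
    """results holds (level, predicted, expected) tuples.
    Returns the exact-match accuracy for each difficulty level."""
    correct: defaultdict[int, int] = defaultdict(int)
    total: defaultdict[int, int] = defaultdict(int)
    for level, predicted, expected in results:
        total[level] += 1
        if normalize(predicted) == normalize(expected):
            correct[level] += 1
    return {level: correct[level] / total[level] for level in sorted(total)}
```

For example, with the made-up results `[(1, "Paris", "paris"), (1, "42", "41"), (2, "1,000", "1000"), (3, "blue", "red")]`, `score_by_level` returns `{1: 0.5, 2: 1.0, 3: 0.0}`. Scoring by exact match is what makes the benchmark's answers unambiguous to grade, but it also means a nearly correct answer earns no credit.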

User Experiences and Testimonials

Users from various professional backgrounds have reported substantial improvements in efficiency and productivity with Manus AI:

- David Chen, Data Analyst: “Manus performs amazingly in handling complex data analysis tasks, saving me 80% of the time compared to doing this work manually, and the results are highly accurate.”

- Rachel Kim, HR Manager: “Using Manus to screen resumes is much more efficient than traditional methods. It can automatically evaluate candidate qualifications and generate rankings, greatly improving our recruitment efficiency.”

- Marcus Thompson, Independent Developer: “As a solo developer, Manus is like my virtual assistant, helping me handle code writing, data analysis, and other tasks, significantly improving my work efficiency.”

Where Manus AI Falls Short

While Manus AI has been praised for its innovative approach, early users have reported several issues:

- Inconsistent Performance – Users, including AI startup founders and tech reviewers, have found Manus AI unreliable. Tasks ranging from booking flights and ordering food to programming projects have ended in error messages or endless loops.

- Misinformation and Overhype – Influential AI figures on social media have compared Manus to China’s DeepSeek, but the comparison lacks a solid foundation. Unlike DeepSeek, Butterfly Effect, the startup behind Manus, has not developed its own AI models.

- Poor Execution of Tasks – Manus struggles with real-world execution. A viral test showed the AI failing to book flights or complete a food delivery order, even after multiple attempts.

- Exclusivity-Driven Hype – The scarcity of invites has fueled the perception that Manus AI is groundbreaking, but technical evaluations suggest it’s not as revolutionary as claimed.

The Future of Manus AI

Manus AI is still in its early access phase, and its developers acknowledge the need for improvements. The team is focused on scaling computing capacity and fixing known issues. However, given its reliance on existing AI models, it remains unclear whether Manus can truly redefine agentic AI or if it will fade as another overhyped tech product.

Manus AI has generated significant attention, but its real-world performance does not yet match its ambitious claims. While it has potential, it currently suffers from technical limitations, exaggerated marketing, and fundamental execution flaws. As more users test it, the coming months will determine whether Manus can evolve into a truly powerful AI agent or if it remains a case of hype exceeding reality.